TheRails

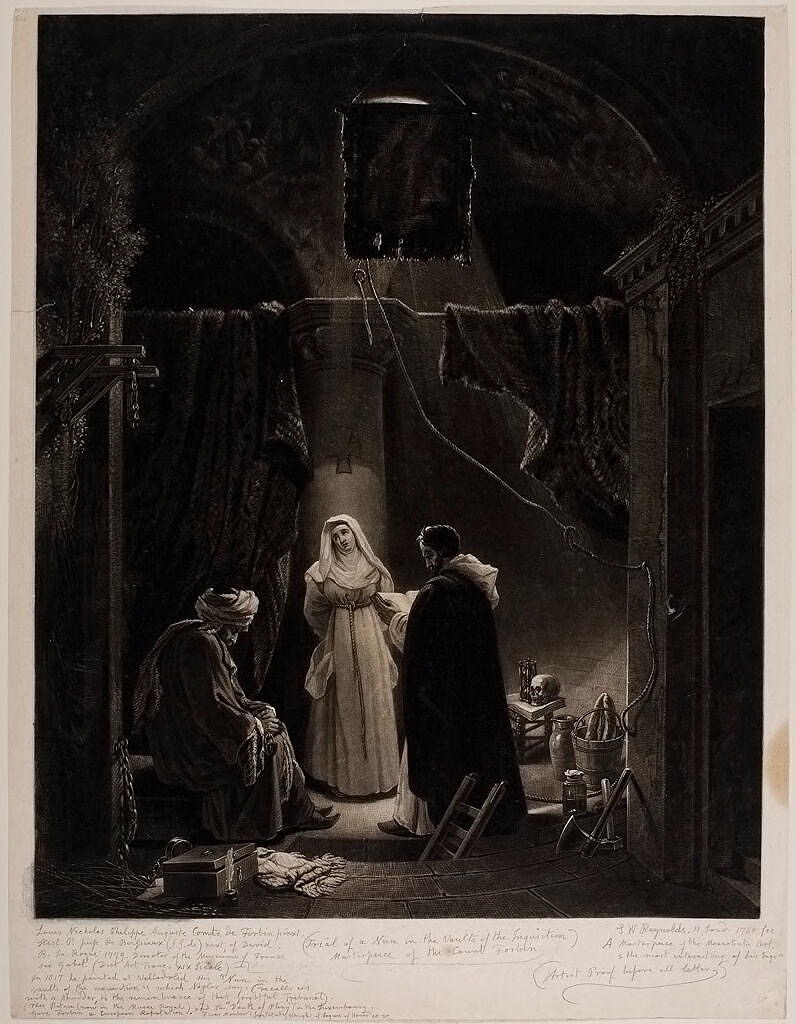

Samuel William Reynolds I:

Trial of a Nun in the Vaults of the Inquisition (19th century)

"It was never my intention to become a tester for Unscrolling technology."

It might be true that all technology comes to market before either the technology or the markets are truly ready. Without some experience, limits cannot be knowledgeably drawn. Without a few failures, edges aren’t obvious. The early adopters take much of the brunt, buffered by a blithe ignorance that they’re exposing themselves to experiences nobody could have possibly imagined. The more complex the context, the more this principle seems to hold. Boeing can’t seem to release an airplane that reliably flies the first few times. I long ago resigned myself to ducking and covering whenever a new release was announced, for it has become certain that even “necessary security and reliability” updates will inevitably end up degrading some functions. We seem to have achieved levels of complexity that render us incapable of properly testing anything before releasing it on a justifiably wary and suspicious public.

The landmark social media case in LA got around to the plaintiff’s testimony this week. She described how she so imprinted on likes, comments, and subscribers that she’d panic in anticipation. She described how she’d panic even worse when her mother threatened to take away her smartphone. At age six, she was already caught in a repeating bind: damned if she did and damned if she didn’t. She continued her immersion into this version of Hell through her formative years. META’s attorney countered that the plaintiff’s treatment history failed to show much focus on her social media use, to which her own attorneys replied that it was a definite contributing factor over and above her family’s divorce and abusive home environment. The plaintiff admitted she still, at twenty, struggles to limit her social media engagement time.

The Pentagon threw an embarrassing conniption this week when an AI supplier denied them permission to violate Asimov’s First Law of Robotics: “A robot may not injure a human being or, through inaction, allow a human being to come to harm.” The Pentagon had proposed using its leased AI engine, Claude, to inform autonomous drones, a definite no-no in the robotics field. The Incumbent lashed out as only the truly ignorant can lash out, accusing the AI company of being WOKE, whatever that might mean, and lefty-liberal, which doesn’t seem like that effective a criticism. An AI engine could have easily generated better! The administration, incapable of administering anything, pronounced that any government contractor using that AI would lose their contract and could even be fined. There are no other AI engines capable of replacing this one, though Elon Musk has one that’s proven to be capable of artificially undressing children. He’s offered his without restrictions. This amounts to offering to loan Wile E. Coyote another anvil. Nobody will be surprised when the DoD accepts the offer, and as a result, autonomous drones start massacring their former masters. Whoopsie!

We can never be fully capable of declaring when and where our technology will go off TheRails. TheRails seem an emergent property, necessarily undefined for the longest time. As soon as an edge gets identified, a new release commences, and the fresh fences no longer define the border. The cutting edge of science might insist, but only ever tentatively, pending fresh experiences and discoveries. We never know before. We would be wise to be wary, but we only rarely agree to take our technology slow and easy. We expect instantaneous results and surprise ourselves when a door blows off on a virgin flight. The AI company turning its back on hundreds of millions in DoD contracts to maintain its ethics might be nearly unprecedented. We more often launch the ship before we’ve finished testing and experience genuine shock and surprise when it somehow sinks itself on its maiden voyage.

Because we are not natively cautious, we might be wise to stay a few releases behind whatever’s defined as current. Let the early adopters absorb the first lessons. Even though the science shows that most six-year-olds never experience genuine social media addiction, we’re wise to show caution. Even for ourselves. I read of one social media user who bought a safe he didn’t know the combination for. He’d secure his smartphone in that box for several hours each day so he could stay away from his feeds. I understand there’s an app for that now. I’m actively avoiding signing up for these inventions. It was never my intention to become a tester for Unscrolling technology, just to try to keep myself from going off TheRails, wherever they might be.

©2026 by David A. Schmaltz - all rights reserved